Trusted Real-Time Discrimination and Override of Unsanctioned UAV Behavior

Principal Investigator: Dr. Cameron Patterson

As civilians, corporations, and governments embrace autonomous systems for transportation, delivery and national defense, a critical open problem is ensuring that these systems can be trusted to correctly and safely complete their missions in shared, complex, dynamic, and even cyber-contested environments. There are no formal guarantees for machine learning algorithms, which may respond arbitrarily when encountering situations not covered in the training data, a risk highlighted by recent trials of self-driving cars. And while automated systems are ubiquitous, they are often deployed with human guidance. For example, in aviation, highly trained pilots provide programming and oversight for largely automated commercial aircraft, and serve as backup should the automation fail. A similar degree of assurance will be necessary for the FAA to grant more than temporary waivers for drones to fly beyond an operator's visual line-of-sight, and for drone activities to move beyond the test environment in general.

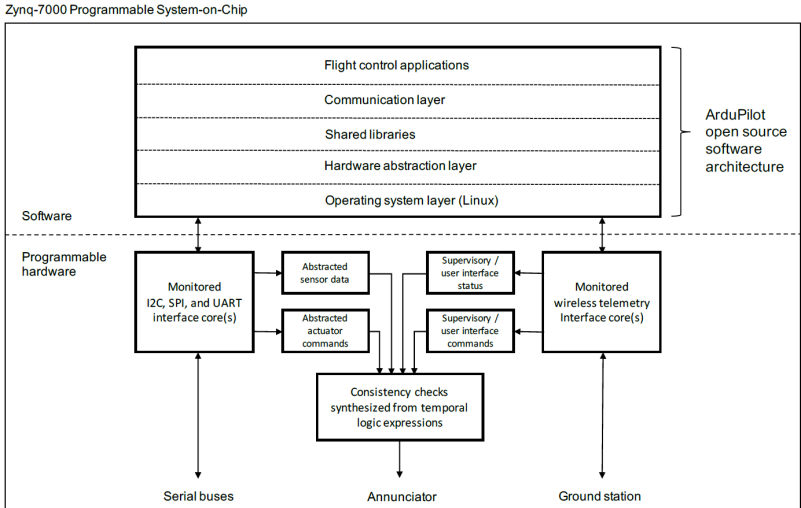

Flight control monitors synthesized from formal specifications can provide mathematical guarantees of correctness for aspects of navigation, sense and avoid, and contingency management. Contingency management is a particularly urgent issue; UAS must be resilient to malware and rogue operators, with oversight and possible override of UAS behavior provided by formally verified, hardware-implemented systems. Hardware is harder to compromise than software but easier to analyze. System inputs (sensor data and supervisory commands) and outputs (actuator commands and status) can be efficiently monitored with configurable hardware synthesized from linear temporal logic formulas and formally analyzed with model checking. The monitors are also partitioned from complex or untrusted components, and their isolated FPGA implementation allows them to avoid common mode failures or malicious interference. With this type of setup, hardware monitors can essentially serve as a trustworthy replacement for human oversight.